Just like making nachos at home is cheaper than getting them delivered from a restaurant, Microsoft hopes making its own computer chips will help rein in its AI costs.

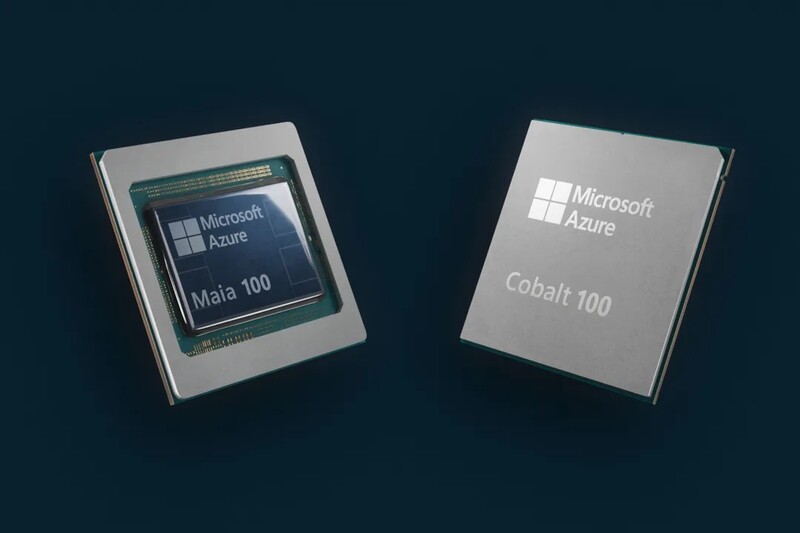

What happened: Among the many AI-related announcements Microsoft made at its Ignite conference this week was a pair of new chips it will build itself, geared towards reducing the costs of delivering its sprawling suite of services.

- Maia is a chip specifically geared towards cloud-based AI tasks, including Microsoft’s $30-a-month Copilot AI tool and the workloads the company powers for OpenAI.

- Cobalt is a CPU meant for more general cloud computing tasks, and is already being tested on Microsoft Teams and its SQL database server. The company plans to sell access to this chip and compete with Amazon’s Graviton, which has over 50,000 customers.

Why it matters: As demand for AI picks up, so does the cost of operating it, much of which comes from hardware. Microsoft won’t stop buying chips from other companies, but having its own means it’s not subject to the ups and downs of the supply chain.

- In the spring, for example, Nvidia’s H100 AI specialist chip was selling for over $40,000.

- Microsoft also plans to bring costs down by routing as many of its AI products as possible through a common set of foundational models, which Maia is optimized for.

Zoom out: It’s not just tech giants that are grappling with a big AI bill — their clients are too, with AI advancements expected to drive 20.4% growth in the cloud computing market next year.